Open Source Arize Phoenix Alternative? Arize Phoenix vs MLflow

Arize Phoenix and MLflow are open source platforms that help teams build production-grade AI agents and LLM applications. In this guide, we compare Phoenix's tracing-focused approach with MLflow's complete AI engineering platform and help you decide which is the right fit.

What is Arize Phoenix?

Arize Phoenix is an open source AI observability and evaluation tool designed for LLM and agent applications. Phoenix focuses on trace inspection, span-level debugging, annotation workflows, and experiment loops for prompt and quality iteration. It is especially popular with teams that want OpenTelemetry-native instrumentation and a dedicated trace-centric UI for LLM development. Phoenix OSS is primarily designed for local development and debugging, while Arize AX, Arize's paid, hosted SaaS offering built on top of Phoenix, targets production-scale deployments with online evaluations, the Alyx Copilot, and enterprise integrations.

What is MLflow?

MLflow is an open source AI engineering platform that enables teams of all sizes to debug, evaluate, monitor, and optimize production-quality AI agents, LLM applications, and ML models while controlling costs and managing access to models and data. With over 30 million monthly downloads, thousands of organizations rely on MLflow each day to ship AI to production with confidence.

Quick Comparison

Choose MLflow if you...

- Care about avoiding vendor lock-in

- Want simple, flexible self-hosting with minimal operational overhead

- Need production-grade evaluation and prompt optimization for AI agents

- Want a unified solution for managing and governing access to LLMs via an AI Gateway

- Prefer a permissive Apache 2.0 licensing model

Choose Arize Phoenix if you...

- Primarily need LLM tracing and basic evaluation workflows

- Prefer a SaaS-first experience

- Already have another solution for managing LLM access

- Are comfortable with ELv2 licensing tradeoffs

Open Source & Licensing

MLflow is an open source project backed by the Linux Foundation, a non-profit vendor-neutral organization, ensuring long-term community stewardship with no single company controlling its direction. MLflow is licensed under Apache 2.0 and maintains full feature parity between its open source release and managed offerings. With adoption by 60%+ of the Fortune 500, MLflow is one of the most widely deployed AI platforms in the enterprise.

Arize Phoenix is distributed under Elastic License 2.0. While Phoenix is free to self-host, ELv2 includes restrictions for offering the software as a managed hosted service. Arize is largely focused on their commercial SaaS offering (Arize AX), and some production capabilities such as online evaluations, the Alyx Copilot, and enterprise integrations are only available in the paid SaaS tier. Phoenix OSS is primarily designed for local development and debugging, while Arize AX targets production-scale deployments.

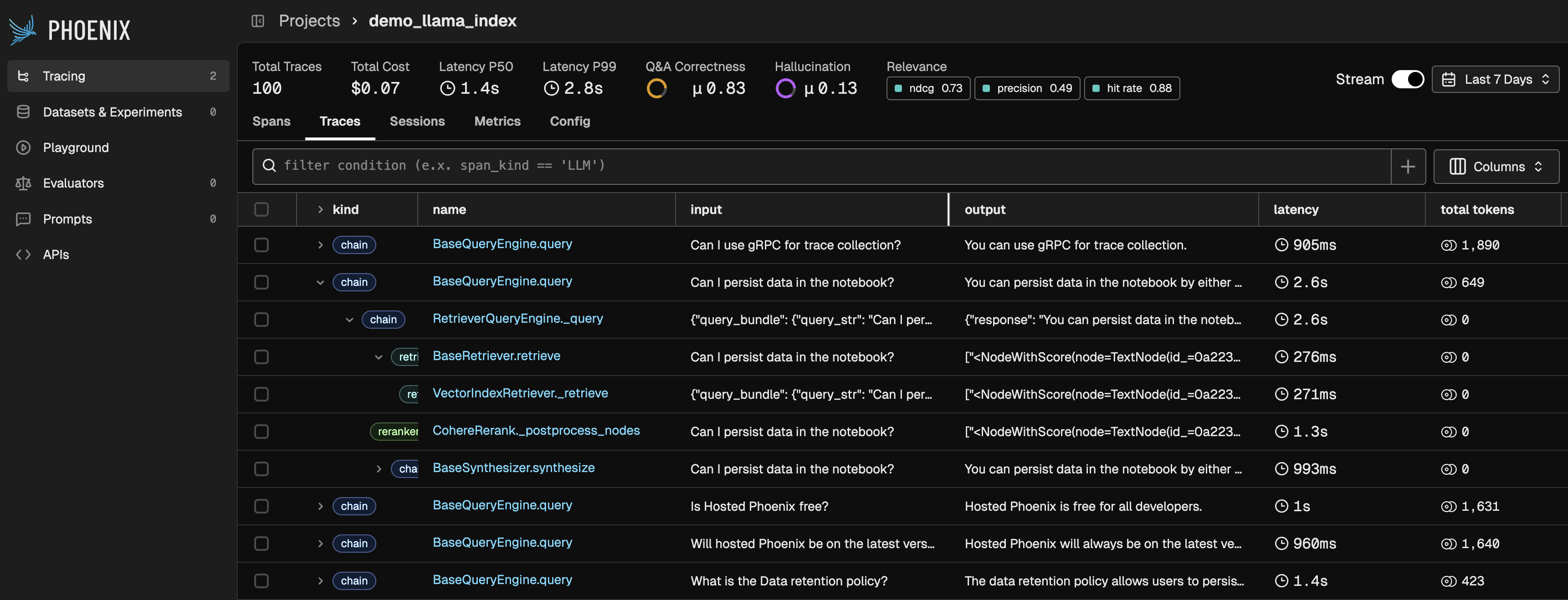

Tracing & Observability

Both platforms support OpenTelemetry-compatible tracing, but they differ in instrumentation ergonomics and production monitoring capabilities.

MLflow auto-instruments 30+ frameworks with a one-line unified autolog() API, including OpenAI, LangGraph, DSPy, Anthropic, LangChain, Pydantic AI, CrewAI, and many more. MLflow uses the native OpenTelemetry data model (Trace + Span + Events) and supports both OTLP ingest and export so teams can integrate with broader observability infrastructure. Teams also track token usage and cost to connect observability with spend.
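To make the link between token usage and spend concrete, here is a rough sketch of the arithmetic involved when per-span token counts are rolled up into a trace-level cost. It is plain Python and does not use MLflow's API; the `SpanUsage` class, the model name, and the prices are all hypothetical placeholders.

```python
from dataclasses import dataclass

# Hypothetical prices in USD per 1,000 tokens. Real prices vary by
# provider and model and change over time.
PRICES_PER_1K = {
    "model-a": {"input": 0.0005, "output": 0.0015},
}


@dataclass
class SpanUsage:
    """Token counts recorded for one LLM call (one span) in a trace."""
    model: str
    input_tokens: int
    output_tokens: int


def span_cost(usage: SpanUsage) -> float:
    """Cost of a single span: tokens / 1000 * price, summed over input and output."""
    price = PRICES_PER_1K[usage.model]
    return (
        usage.input_tokens / 1000 * price["input"]
        + usage.output_tokens / 1000 * price["output"]
    )


def trace_cost(spans: list[SpanUsage]) -> float:
    """Cost of a whole trace: the sum of its span costs."""
    return sum(span_cost(s) for s in spans)


spans = [
    SpanUsage("model-a", input_tokens=1200, output_tokens=300),
    SpanUsage("model-a", input_tokens=800, output_tokens=200),
]
print(f"trace cost: ${trace_cost(spans):.5f}")
```

An observability platform performs this kind of rollup for you; the sketch only shows why capturing token counts on each span is what makes cost reporting possible.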

Phoenix uses OpenInference/OpenTelemetry instrumentation with explicit tracer registration. The open source version of Phoenix does not offer online monitoring as part of its observability stack, so teams that need production monitoring must upgrade to the paid SaaS version.

MLflow

```python
import mlflow

mlflow.langchain.autolog()
# That's it. Chain and tool calls are captured automatically.
```

Arize Phoenix

```python
from phoenix.otel import register
from openinference.instrumentation.langchain import LangChainInstrumentor

tracer_provider = register(project_name="my-app")
LangChainInstrumentor().instrument(tracer_provider=tracer_provider)
# Continue running your app as usual
```

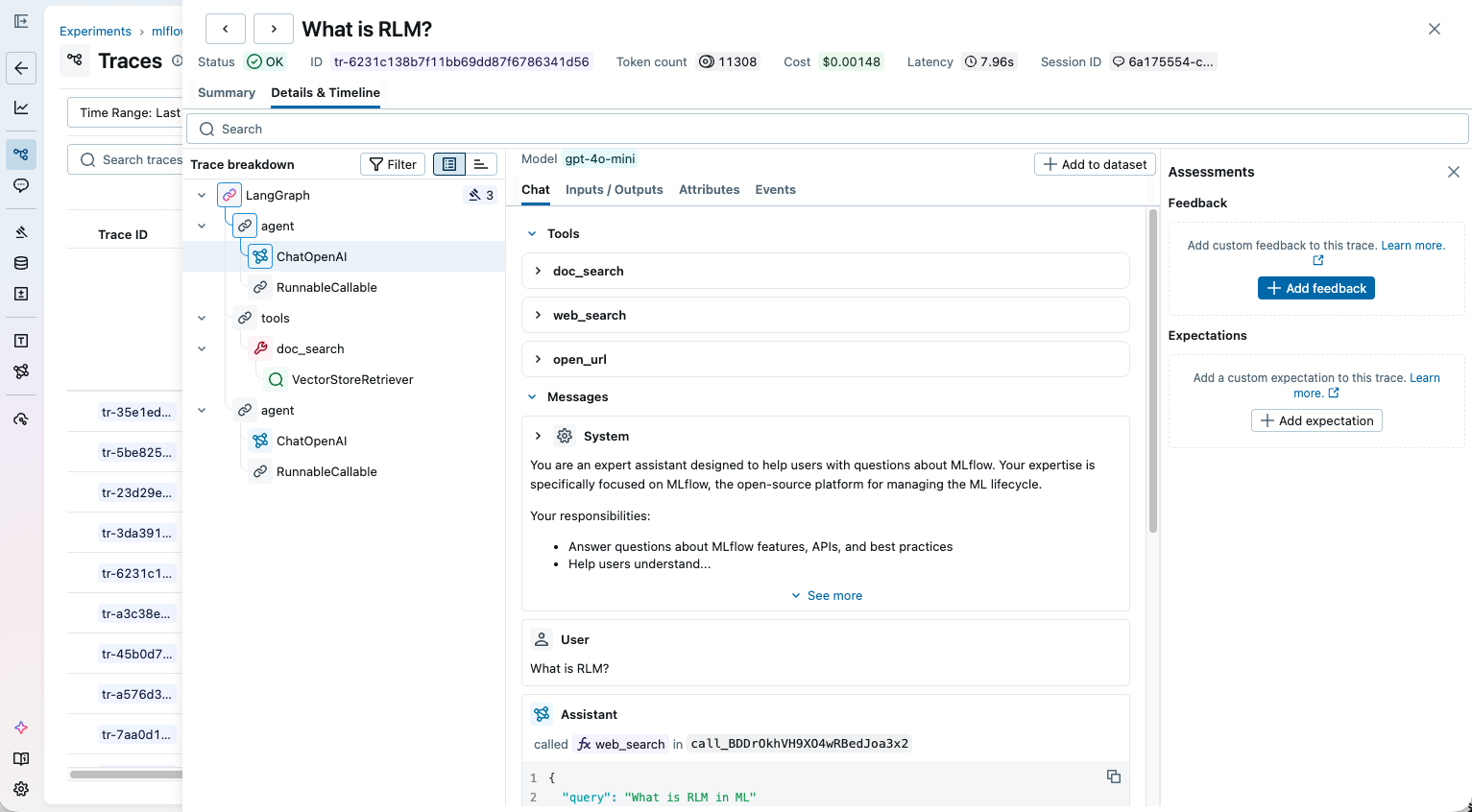

Evaluation & Experimentation

Evaluation is where the gap between MLflow and Arize Phoenix is most pronounced. Phoenix offers basic evaluation loops, such as inspecting traces, annotating outputs, building datasets, and running experiments, but high-value features such as online evaluations require the paid Arize AX tier, and the open source evaluation capabilities are less mature at scale.

MLflow provides production-grade evaluation backed by a dedicated research team. It supports a rich set of built-in scorers, integration with leading evaluation libraries (RAGAS, DeepEval, TruLens, Guardrails AI), and advanced capabilities like multi-turn evaluation, online evaluation, and aligning LLM judges with human feedback. If your team needs to move beyond vibe checks to rigorous quality assurance, MLflow is purpose-built for it.
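For readers new to the idea of a custom metric, the following minimal sketch shows what a code-based scorer does conceptually: it compares each output with an expected answer and averages the result over a dataset. This is plain Python, not MLflow's or Phoenix's scorer API; `exact_match`, `run_eval`, the dataset, and the canned answers are all made up for illustration.

```python
from typing import Callable


def exact_match(output: str, expected: str) -> float:
    """Return 1.0 if the answers match after trimming and lowercasing, else 0.0."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0


def run_eval(
    rows: list[dict],
    predict_fn: Callable[[str], str],
    scorer: Callable[[str, str], float],
) -> float:
    """Run predict_fn on every row and return the mean scorer value."""
    scores = [scorer(predict_fn(row["input"]), row["expected"]) for row in rows]
    return sum(scores) / len(scores) if scores else 0.0


# Hypothetical evaluation dataset.
rows = [
    {"input": "capital of France", "expected": "Paris"},
    {"input": "2 + 2", "expected": "4"},
    {"input": "largest planet", "expected": "Jupiter"},
    {"input": "author of Hamlet", "expected": "Shakespeare"},
]

# Stand-in for a real model or agent: canned answers, one of them wrong.
canned = {
    "capital of France": "paris ",
    "2 + 2": "4",
    "largest planet": "Saturn",
    "author of Hamlet": "Shakespeare",
}

accuracy = run_eval(rows, lambda question: canned[question], exact_match)
print(f"exact-match accuracy: {accuracy:.2f}")
```

LLM judges follow the same shape, with the comparison function replaced by a model call that returns a score or label.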

| Capability | MLflow | Arize Phoenix |
|---|---|---|
| Built-in LLM Judges | ✅ | ✅ |
| Custom Metrics | ✅ | ✅ |
| Versioning Metrics | ✅ | ❌ |
| Aligning Judges with Human Feedback | ✅ | ❌ |
| Multi-Turn Evaluation | ✅ | ❌ |
| Visualization & Comparison | ✅ | ❌ |
| Online Evaluation | ✅ | Arize AX (paid SaaS) only |
| Evaluation Library Integration | RAGAS, DeepEval, TruLens, Guardrails AI | Custom integrations only |
| License | Apache 2.0 | Elastic License 2.0 |

Architecture & Operation

MLflow's pluggable backend scales from a local SQLite file to a production cluster with a choice of databases, without changing application code. Phoenix is optimized for local debugging and limits you to SQLite or PostgreSQL.
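One way to keep application code identical across environments is to read the tracking location from configuration. The sketch below assumes the standard `MLFLOW_TRACKING_URI` environment variable and `mlflow.set_tracking_uri`; the `resolve_tracking_uri` helper and the local default are illustrative choices, not part of MLflow.

```python
import os
from typing import Mapping, Optional

# Illustrative local default: a SQLite file in the working directory.
DEFAULT_URI = "sqlite:///mlflow.db"


def resolve_tracking_uri(env: Optional[Mapping[str, str]] = None) -> str:
    """Return the configured tracking URI, falling back to the local default."""
    env = os.environ if env is None else env
    return env.get("MLFLOW_TRACKING_URI", DEFAULT_URI)


if __name__ == "__main__":
    uri = resolve_tracking_uri()
    try:
        import mlflow  # optional here so the sketch runs without MLflow installed
    except ImportError:
        print(f"MLflow not installed; would have used {uri}")
    else:
        mlflow.set_tracking_uri(uri)
        print(f"Tracking to {uri}")
```

On a laptop this resolves to the SQLite file; in production, setting `MLFLOW_TRACKING_URI` to the address of a tracking server redirects the same code without edits.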

| Dimension | MLflow | Arize Phoenix |
|---|---|---|
| Primary Scope | End-to-end AI engineering platform | LLM observability and evaluation workbench |
| Core Data Plane | Tracking server + SQL backend + artifact store | Collector + UI + SQLite or PostgreSQL |
| Operational Focus | Unified platform for agents, LLMs, and ML models | Trace-centric debugging and iteration loop |

MLflow keeps tracing, evaluation, and model artifacts in one system. Phoenix covers trace inspection well, but teams typically need additional tools as their scope grows.

AI Gateway

As LLM applications move to production, teams face growing challenges around managing API keys, controlling costs, switching between providers, and enforcing governance policies. This is where an AI Gateway, a centralized layer between your applications and LLM providers, has become an essential piece of production AI infrastructure.

Arize Phoenix does not offer a gateway capability. To manage costs and model access, teams using Phoenix must bolt on a separate tool such as LiteLLM or Portkey, or build a custom gateway.

MLflow offers a built-in AI Gateway for governing LLM access across your organization. It provides a standard endpoint that routes requests to any supported provider (OpenAI, Anthropic, AWS Bedrock, Azure OpenAI, Google Gemini, and more), with built-in rate limiting, fallbacks, usage tracking, and credential management. Teams can switch providers, add guardrails, or enforce usage policies without changing application code.
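The fallback behavior mentioned above can be illustrated with a small conceptual sketch: try providers in priority order and return the first successful response. This is not MLflow's gateway implementation or configuration format; `route_with_fallback` and the two stand-in provider functions are hypothetical.

```python
from typing import Callable, Sequence, Tuple

Provider = Tuple[str, Callable[[str], str]]


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has failed."""


def route_with_fallback(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, response) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real gateway would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stand-ins for real provider clients.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("primary timed out")


def stable_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = route_with_fallback(
    "hello",
    [("primary", flaky_primary), ("secondary", stable_secondary)],
)
print(used, reply)
```

Placing this logic in a gateway rather than in each application is what lets teams change the provider order or credentials in one place.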

Summary

Arize Phoenix is a solid observability tool, but tracing is only one piece of the puzzle. Its limited open source feature set and absence of an AI Gateway mean that teams inevitably need additional tools to build a complete AI engineering stack. Choose Phoenix if tracing and basic evaluation are all you need and you prefer a SaaS-first experience.

MLflow is a complete AI engineering platform. It covers tracing, production-grade evaluation, prompt optimization, an AI Gateway, and governance, all backed by the Linux Foundation with full open source feature parity. Choose MLflow if you need a vendor-neutral platform that goes beyond observability to help you actually improve and ship AI agents and LLM applications with confidence.